Deployment as a Bottleneck: When AI Codes Faster Than You Can Deploy

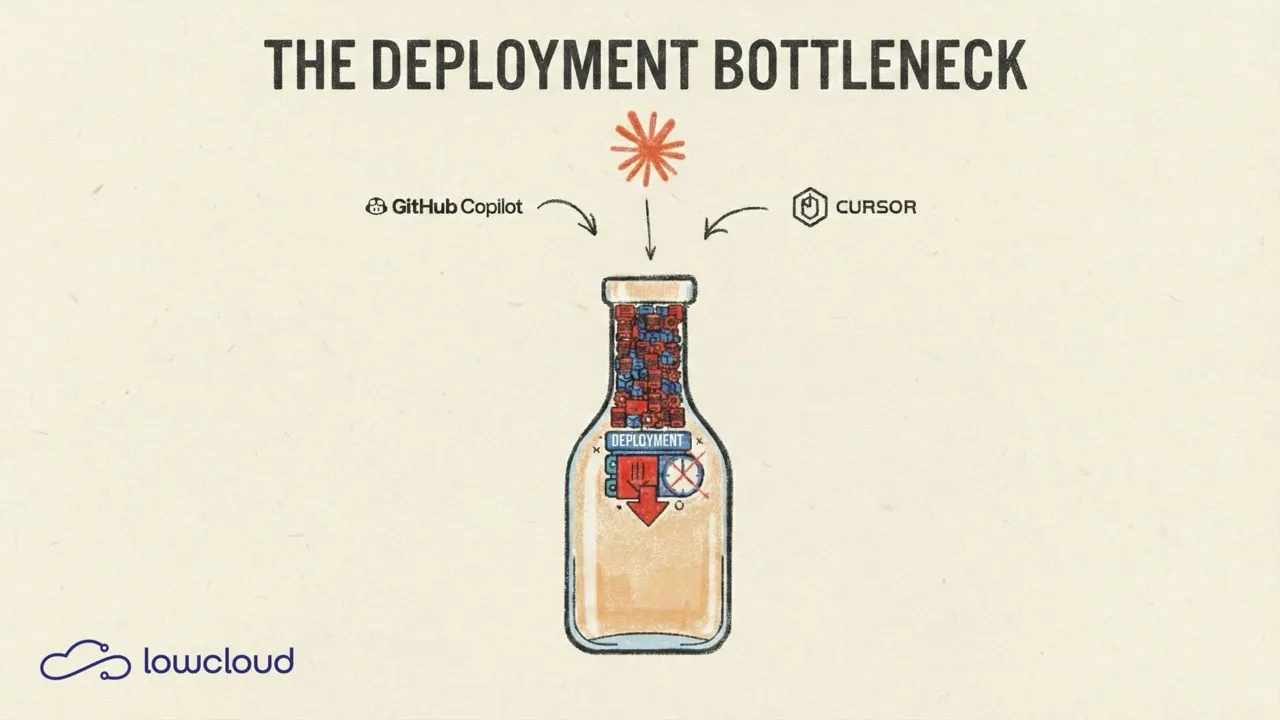

AI tools like GitHub Copilot, Cursor, and Claude have changed how developers write code. Features that once took days can now be built in hours. Yet while code production has surged, a critical part of the software delivery process is lagging behind: deployment. If your team is fast at coding but still slow to ship, you haven't eliminated the bottleneck; you've just shifted it further down the pipeline.

The New Bottleneck in Software Development

What has changed because of AI?

AI-assisted development tools are no longer science fiction. According to a 2023 study by GitHub, developers using Copilot completed a benchmark coding task roughly 55% faster than those working without it. In practice, this can mean producing more code, shipping more features, and opening more pull requests in far less time.

At first glance, this sounds like a massive win. However, as code velocity spikes, unprecedented pressure hits your downstream processes. Tests must execute faster. Code review processes have to scale.

And most importantly, deployments must be fast, reliable, and reproducible.

Why deployment hasn't kept pace

Deployment infrastructure rarely scales organically alongside development speed. Many teams still rely on pipelines built years ago, designed for an era when deploying once a week was considered the gold standard. Manual approvals, fragile handwritten Bash scripts, and missing rollback mechanisms (classic symptoms of the most common DevOps problems in SMBs) were tolerable back when the flow of new code was slow and manageable.

In a world where AI substantially increases the volume of code a team produces, these exact weaknesses turn into critical bottlenecks.

What Turns Deployment Into a Bottleneck?

Manual steps and lack of automation

The most common culprit behind sluggish deployments is manual intervention. A developer creates a pull request, waits for a code review, waits for staging approval, and finally waits for the Ops team to kick off the production deployment. Every single hand-off burns time. Add them all up, and you're looking at hours or even days of waiting to ship code that took mere minutes to write.

Automation is the key here, and one of the biggest levers for cutting IT costs. A comprehensive CI/CD pipeline, running from the initial commit all the way to deployment, reduces manual touchpoints to a minimum and keeps deployments consistent and reproducible.

Environment conflicts and configuration chaos

"It works on my machine" is an industry-wide punchline. But "It works on staging, but not in production" is a terrifying, daily reality for many.

Different environment variables, mismatched dependency versions, and unversioned configurations all lead to deployment failures that are incredibly frustrating to track down and debug.
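Configuration drift of this kind can often be caught before a deployment by comparing the environments directly. The following is a minimal sketch, assuming each environment's configuration has already been loaded into a plain dictionary; the function name `config_drift`, the `MISSING` marker, and the example keys are all hypothetical and not part of any specific tool.

```python
from typing import Any

# Hypothetical marker for a key that exists in only one environment.
MISSING = "<missing>"


def config_drift(staging: dict[str, Any], production: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Return every key whose value differs between the two environments.

    Each entry maps the key to a (staging_value, production_value) pair.
    """
    drift = {}
    for key in sorted(staging.keys() | production.keys()):
        left = staging.get(key, MISSING)
        right = production.get(key, MISSING)
        if left != right:
            drift[key] = (left, right)
    return drift


# Example values are invented for illustration.
staging = {"DB_POOL_SIZE": "5", "LIB_VERSION": "2.1.0", "DEBUG": "true"}
production = {"DB_POOL_SIZE": "20", "LIB_VERSION": "2.1.0"}

for key, (stg, prod) in config_drift(staging, production).items():
    print(f"{key}: staging={stg} production={prod}")
```

Running a check like this as a pipeline step turns a hard-to-debug production failure into an explicit, reviewable difference.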

Tools like Docker and Kubernetes were built to tackle this directly through containerization and declarative infrastructure. However, they inevitably introduce their own steep learning curves and massive operational complexity.

The Kubernetes complexity trap

Kubernetes is the de facto standard for container orchestration. At the same time, it is one of the most complex platforms in the modern software stack. Running your own self-managed Kubernetes cluster means wrestling with YAML manifests, Ingress controllers, RBAC rules, StorageClasses, network policies, and a sprawling ecosystem of operators.

For many development teams, this extreme complexity is a massive hurdle. Every hour spent maintaining infrastructure is an hour stolen from actual product development. Here, the deployment bottleneck isn't caused by a lack of proper tooling—it's caused by the overwhelming operational weight of the platform itself.

DORA Metrics: Where Your Bottleneck Becomes Measurable

The DevOps Research and Assessment (DORA) program has defined four key metrics for measuring the performance of a software delivery process:

- Deployment Frequency: How often do you successfully deploy to production?

- Lead Time for Changes: How long does it actually take from committing code to running it in production?

- Change Failure Rate: How often do your deployments directly cause service degradation or failures?

- Mean Time to Restore (MTTR): How fast can you recover when a deployment inevitably breaks something?

According to DORA's published benchmarks, elite teams deploy multiple times a day, keep their lead time for changes under one hour, and restore service in under an hour when something breaks. If your team falls significantly behind these benchmarks, the signal is clear: your deployment process is likely the anchor dragging down your overall velocity.
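The four metrics above can be computed from a simple log of deployments. The sketch below is one possible way to do it, assuming you can export a commit timestamp, a deploy timestamp, and a failure flag for each deployment; the `Deployment` record and the `dora_metrics` function are hypothetical names, and the choice of median for lead time and mean for restore time is an assumption rather than an official DORA definition.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it degrade the service?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments: list[Deployment], period_days: int) -> dict[str, float]:
    """Summarize the four DORA metrics for one observation period."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_times = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deployments
    ]
    failures = [d for d in deployments if d.failed]
    restore_times = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deployments_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        "mean_time_to_restore_hours": (
            sum(restore_times) / len(restore_times) if restore_times else 0.0
        ),
    }


# Invented sample data: four deployments over two days, one of which failed.
start = datetime(2024, 1, 1, 9, 0)
sample = [
    Deployment(start, start + timedelta(minutes=30)),
    Deployment(start, start + timedelta(hours=1)),
    Deployment(start, start + timedelta(hours=2), failed=True,
               restored_at=start + timedelta(hours=2, minutes=30)),
    Deployment(start, start + timedelta(hours=4)),
]
print(dora_metrics(sample, period_days=2))
```

Tracking these numbers per week makes it visible whether a pipeline change actually moved the bottleneck.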

Strategies to Unblock Your Deployments

Adopt GitOps workflows

GitOps is an approach where Git serves as the single source of truth for your entire infrastructure and application state. Every change, whether application code or configuration, is tracked via pull requests and automatically synced into the target environment.

Tools like Argo CD or Flux implement this principle for Kubernetes environments. The result is automated, auditable deployments. Manual interventions are largely eliminated, and rolling back can be as simple as running git revert on the offending commit.
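The core idea behind GitOps is a reconciliation step: compare the desired state stored in Git with what is actually running, then apply the difference. The sketch below illustrates that idea only. It is not the API of Argo CD or Flux, and the `reconcile` function and the app-to-image-tag dictionaries are hypothetical simplifications.

```python
def reconcile(desired: dict[str, str], actual: dict[str, str]) -> list[str]:
    """Compute the actions needed to make `actual` match `desired`.

    Both arguments map an application name to the image tag it should run
    (desired, as declared in Git) or currently runs (actual, in the cluster).
    """
    actions = []
    for app, tag in sorted(desired.items()):
        if app not in actual:
            actions.append(f"create {app}:{tag}")
        elif actual[app] != tag:
            actions.append(f"update {app}: {actual[app]} -> {tag}")
    # Anything running that Git no longer declares gets removed.
    for app in sorted(actual.keys() - desired.keys()):
        actions.append(f"delete {app}")
    return actions


# Invented example state.
desired_state = {"api": "v2", "web": "v5"}
running_state = {"api": "v1", "worker": "v3"}

for action in reconcile(desired_state, running_state):
    print(action)
```

In this model a rollback needs no special mechanism: reverting the commit changes the desired state back, and the next reconciliation produces the matching update.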

Modernize your CI/CD pipelines

A modern CI/CD pipeline goes far beyond simply building and pushing a Docker image. It should also integrate:

- Automated Testing (Unit, Integration, End-to-End)

- Security Scanning (container images, dependencies)

- Canary Deployments or Blue-Green Deployments for highly controlled, low-risk rollouts — see our SMB deployment guide for practical rollout strategies

- Automated Rollbacks triggered by failing health checks

Upgrading your pipeline to this standard doesn't just clear up the deployment frequency bottleneck. It also helps lower your change failure rate.
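A canary rollout combined with an automated rollback can be sketched as a simple loop: shift a little more traffic to the new version at each step, check a health signal, and stop as soon as it fails. This is a minimal illustration under stated assumptions; the `canary_rollout` function, the `error_rate_at` callback, and the 1% error threshold are hypothetical, and a real pipeline would read the error rate from its monitoring system.

```python
from typing import Callable


def canary_rollout(
    steps: list[int],
    error_rate_at: Callable[[int], float],
    max_error_rate: float = 0.01,
) -> tuple[str, int]:
    """Shift traffic to the new version step by step.

    `steps` lists the traffic percentages to try in order, and
    `error_rate_at(percent)` returns the observed error rate at that level.
    Returns ("promoted", last_percent) if every step stayed healthy, or
    ("rolled_back", percent) for the first step that exceeded the threshold.
    """
    if not steps:
        raise ValueError("need at least one traffic step")
    for percent in steps:
        if error_rate_at(percent) > max_error_rate:
            # Health check failed: stop here and send traffic back to the old version.
            return ("rolled_back", percent)
    return ("promoted", steps[-1])


# Invented health signals for illustration.
def healthy(percent: int) -> float:
    return 0.001


def degrades_under_load(percent: int) -> float:
    return 0.001 if percent < 50 else 0.05


print(canary_rollout([5, 25, 50, 100], healthy))
print(canary_rollout([5, 25, 50, 100], degrades_under_load))
```

Because the faulty version never receives more than the failing step's share of traffic, a bad release affects only part of the user base.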

Leverage PaaS platforms to abstract complexity

Not every engineering team has the bandwidth or dedicated headcount to operate and optimize a full-blown Kubernetes infrastructure from scratch. This is exactly where Platform-as-a-Service (PaaS) solutions shine. They abstract away the complexity of Kubernetes, giving development teams a straightforward, self-serve interface for handling deployments.

Instead of meticulously maintaining YAML files and manually tinkering with Kubernetes resources, teams can deploy code straight out of their Git repository. Automated pipelines, pre-configured staging environments, and fully integrated monitoring are available out-of-the-box.

How a Modern PaaS Liberates Your Deployments

A modern, cloud-native Kubernetes PaaS platform like lowcloud aggressively attacks the deployment bottleneck from multiple angles at once:

Self-Service Deployments: Developers gain the exact tools to deploy their own applications completely independently. Waiting for a dedicated Ops engineer is entirely removed from the workflow.

Automated Pipelines: A simple Git push automatically translates into a full deployment. The build, test, and rollout phases happen automatically. Achieving lead times of under an hour becomes a realistic standard for the entire team.

Kubernetes Without the Headache: The entire underlying Kubernetes infrastructure is fully managed by the platform. The team directs 100% of their focus purely on their application. Cluster upgrades, detailed node configurations, and obscure network policies are securely handled in the background.

Integrated Observability and Automated Rollbacks: If a rollout goes sideways, an automatic rollback is triggered instantly. Detailed metrics and system logs are natively available within the platform, removing the strict need to stitch together an external observability stack.

The result: workflows that used to be dragged down by multi-hour processes can run several times a day, with better security, better reliability, and far less manual heavy lifting.

What's Next: AI in the Deployment Process?

If AI has drastically accelerated how we write code, the next logical question to ask is: Will AI take control of the deployment process too?

The answer is increasingly yes. The first concrete approaches are already being used in production:

- Automated Test Generation: AI doesn't just generate the application code; it writes the accompanying test suites. This drastically improves pipeline quality right out of the gate.

- Intelligent Rollbacks: AI models that detect subtle anomalies in system metrics can automatically trigger a clean rollback, ideally before users notice there's a bug.

- Predictive Scaling: AI-driven auto-scalers analyze complex traffic patterns and proactively scale up infrastructure ahead of spikes, rather than reacting only after the system starts choking.

- AI-Assisted Incident Diagnostics: Specialized tools read through large volumes of logs and metrics to suggest likely causes and fixes during outages.
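The rollback trigger described in the list above comes down to one question: does the current metric deviate too far from its recent baseline? The sketch below answers it with a plain z-score, which is a simple statistical stand-in and not an AI model. The `is_anomalous` function, the threshold of 3 standard deviations, and the latency figures are hypothetical.

```python
from statistics import mean, stdev


def is_anomalous(baseline: list[float], current: float, threshold: float = 3.0) -> bool:
    """Flag `current` if it lies more than `threshold` standard deviations
    away from the mean of the baseline samples."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline samples")
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all counts as a deviation.
        return current != mu
    return abs(current - mu) / sigma > threshold


# Invented p95 latency samples in milliseconds from before the rollout.
latency_before = [100.0, 102.0, 98.0, 101.0, 99.0]

print(is_anomalous(latency_before, 103.0))  # small wobble after the rollout
print(is_anomalous(latency_before, 140.0))  # large jump after the rollout
```

A learned model would replace the z-score with something more sensitive, but the surrounding contract stays the same: a metric goes in, and a rollback decision comes out.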

However, the golden rule remains: AI acts as a phenomenal amplifier. It does not replace the fundamental need for a rock-solid deployment platform.

Even the most brilliant AI requires an extremely stable, highly reproducible foundation to operate on:

- It demands standardized, predictable deployments, not fragile, hand-cranked pipelines.

- It heavily requires clean, strict isolation between Dev, Staging, and Production environments.

- It needs absolute guardrails enforced through strict policies, pristine secrets handling, and deep audit logs.

- It fundamentally relies on flawless observability (Logs, Metrics, Traces) to make any intelligent decisions.

This is precisely where lowcloud enters the equation. It delivers exactly this highly stable, sovereign foundation for Kubernetes deployments. The self-service focus, robust CI/CD principles, and clean monitoring capabilities make executing complex—or even AI-driven—rollouts an incredibly safe, reliable reality.

The future of software delivery is automated, and increasingly intelligent.

That demands a platform capable of absorbing complexity while keeping security tight. Only with those foundations will AI provide not just faster code, but also safer, more controlled deployments.

The Bottom Line

Few would dispute that AI has dramatically sped up the creation of code. But if you want to harness the full power of that acceleration, you need to modernize your deployment process. Legacy, manual deployment workflows are now one of the most glaring bottlenecks in the modern software delivery cycle.

The good news is that you don't need to reinvent the wheel. The tools and battle-tested best practices to break this bottleneck are already available. Modern CI/CD pipelines, GitOps workflows, and Kubernetes PaaS approaches allow teams of any size to ship code quickly and safely. It all works today, without forcing you to carry the operational overhead of running a self-managed Kubernetes cluster.

lowcloud hands you exactly this leverage. Zero operational pain, entirely self-serve, and blazing fast. Your code should reach your users exactly as fast as AI helps you write it. Learn more about lowcloud and securely roll out your first robust deployment today.