Zero-Config Kubernetes: Why Simplicity Wins

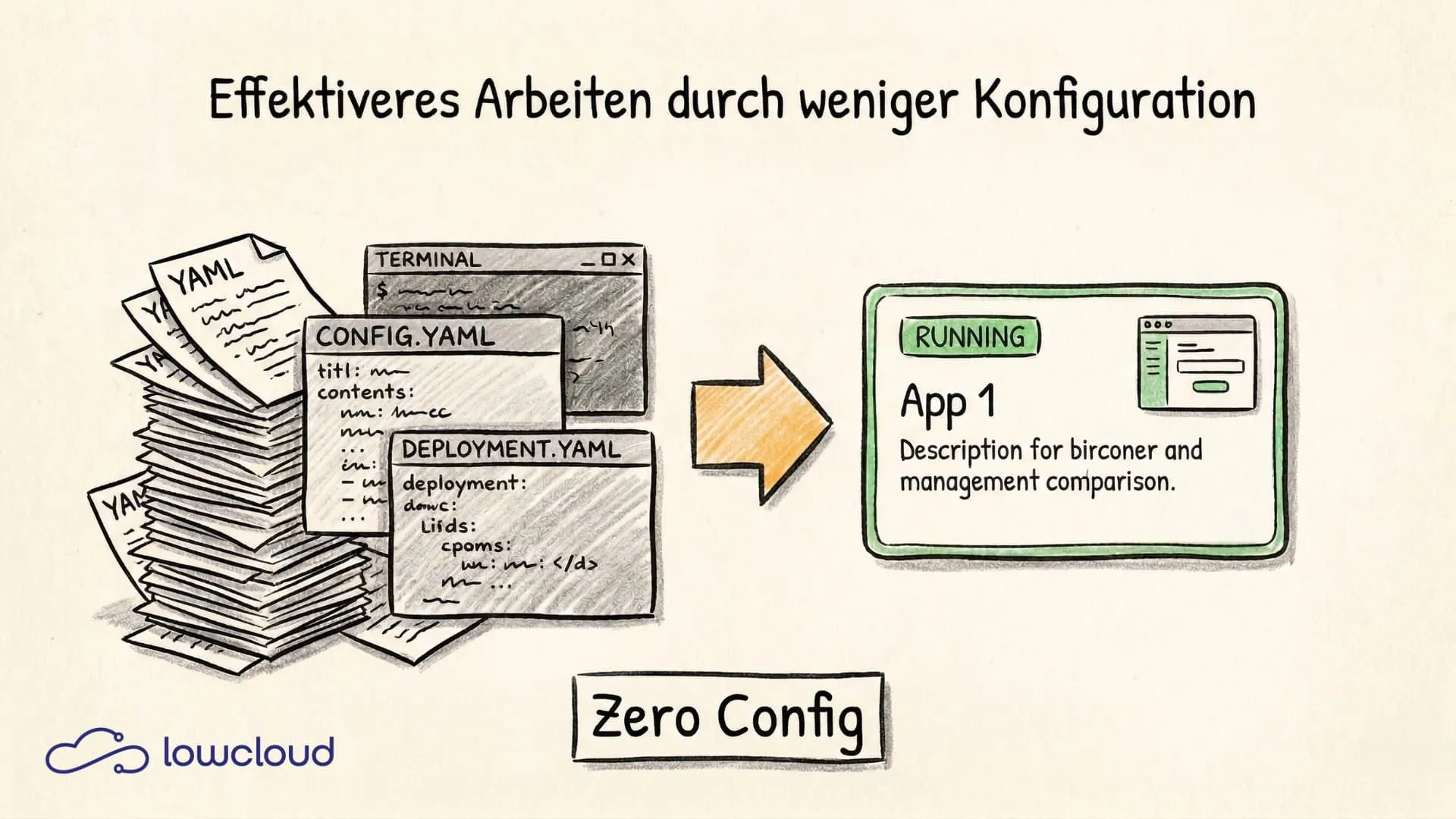

Kubernetes can do almost anything, but that sentence has a catch: being able to do almost anything also means you have to configure almost everything yourself. For many teams this isn't a theoretical problem but a practical one that eats up working hours and delays deployments. Zero-configuration isn't about giving up control. It's a design decision that says: sensible defaults are better than forced freedom.

What Zero-Configuration Actually Means

The idea has its roots in web development and build tooling. Ruby on Rails popularized it as "convention over configuration", Maven adopted it for Java builds, and webpack long ignored it, which came back to bite it: its configuration burden became notorious until version 4 added zero-config defaults. The core idea is simple: a system should work with little or no configuration for the most common use case.

For infrastructure and Kubernetes, this means that a platform which knows what a typical deployment looks like shouldn't force every team to translate that knowledge into YAML from scratch. Network configuration, resource limits, health checks, TLS termination: these aren't individual decisions for each project. For the large majority of services, the requirements are essentially the same.

Zero-configuration doesn't mean you can't configure anything. It means you don't have to configure anything to get started. The reasoning behind this approach is grounded in minimalist cloud architecture: the principle that every component must justify its maintenance cost.

How Much Configuration Overhead Kubernetes Really Causes

Anyone who has set up Kubernetes themselves knows the feeling. You start deploying a simple application and end up with a stack of YAML files: Deployment, Service, Ingress, ConfigMap, Secret, HorizontalPodAutoscaler, NetworkPolicy. For an app that needs to run in three environments, this multiplies quickly.
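The multiplication is easy to put into numbers. The sketch below is a rough count that assumes one manifest per resource kind, per app, per environment (an illustrative assumption; many teams bundle several objects into one file, which changes the file count but not the amount of configuration):

```python
# The seven resource kinds listed above, one manifest each per app and environment.
RESOURCE_KINDS = [
    "Deployment", "Service", "Ingress", "ConfigMap",
    "Secret", "HorizontalPodAutoscaler", "NetworkPolicy",
]


def manifest_count(apps: int, environments: int, kinds: int = len(RESOURCE_KINDS)) -> int:
    """Manifests to write and maintain, assuming one per kind, per app, per environment."""
    return apps * environments * kinds


print(manifest_count(apps=1, environments=3))   # 21
print(manifest_count(apps=10, environments=3))  # 210
```

One app in three environments already means 21 manifests; ten apps mean 210.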

This isn't a criticism of Kubernetes as a system. It's a powerful tool built for complex, scalable infrastructure. But this tool treats configuration as the explicit responsibility of the user — for every detail, in every environment, for every team.

What emerges isn't malice but configuration drift: every team develops its own conventions, its own templates, its own workarounds. After two years, you don't have one Kubernetes platform — you have ten slightly different Kubernetes setups that all claim to do the same thing.
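One way to make this drift visible is to compare each team's settings against a shared baseline. A minimal sketch, assuming each team's settings have already been flattened into a dictionary; the team names, keys, and baseline values are hypothetical:

```python
from typing import Any

# Hypothetical organization-wide baseline for a few settings that commonly drift.
BASELINE: dict[str, Any] = {
    "replicas": 2,
    "runAsNonRoot": True,
    "cpu_limit": "500m",
    "liveness_path": "/healthz",
}


def find_drift(team_configs: dict[str, dict[str, Any]],
               baseline: dict[str, Any]) -> dict[str, dict[str, tuple[Any, Any]]]:
    """Per team, every baseline key whose value differs, as key -> (expected, actual)."""
    drift: dict[str, dict[str, tuple[Any, Any]]] = {}
    for team, config in team_configs.items():
        diffs = {
            key: (expected, config.get(key))  # actual is None if the team never set the key
            for key, expected in baseline.items()
            if config.get(key) != expected
        }
        if diffs:
            drift[team] = diffs
    return drift


teams = {
    "checkout": {"replicas": 2, "runAsNonRoot": True, "cpu_limit": "500m", "liveness_path": "/healthz"},
    "search": {"replicas": 3, "runAsNonRoot": True, "cpu_limit": "1", "liveness_path": "/healthz"},
    "billing": {"replicas": 2, "cpu_limit": "500m", "liveness_path": "/health"},
}

for team, diffs in find_drift(teams, BASELINE).items():
    print(team, diffs)
```

Not every deviation is a mistake (the extra replica for `search` may be deliberate); the point is that deviations become visible instead of accumulating silently.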

The real effort isn't just in the initial setup. It's in ongoing operations: onboarding new developers, keeping configurations up to date, debugging errors that stem from inconsistent setups.

Convention over Configuration — An Old Principle Cloud Native Needs Again

The principle is older than Kubernetes. Martin Fowler described it, Rails made it famous: a framework should make decisions for the developer as long as those decisions correctly cover the most common use cases. The developer only needs to intervene when they want to deviate from the default behavior.

In the Kubernetes world, this thinking has struggled to take hold. Kubernetes is deliberately designed as a platform for platforms — it offers primitives, not opinions. This makes sense at the orchestrator level itself. But the layer above it, the one developers use daily, has been missing for a long time.

What has changed: platforms like Vercel, Railway, and Render have shown that developers are willing to give up control when the defaults are right. The growth of these platforms is no coincidence: it signals real demand for good defaults.

What Makes Good Defaults

Not every default is a good default. Bad defaults are those that break at scale, open security vulnerabilities, or are so restrictive that they need to be changed at the first real requirement.

Good defaults have three properties:

Security: The default state should be the secure state. A pod without explicit SecurityContext settings shouldn't run as root. TLS should be enabled by default. Network access should be restricted by default, not open.

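Functionality: The default must work. A health check that's enabled by default but points to a non-existent endpoint is worse than no health check at all: a failing liveness probe makes Kubernetes restart an otherwise healthy container over and over, and a failing readiness probe keeps it from ever receiving traffic.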

Customizability: When someone needs to deviate from the default, that should be possible without leaving the abstraction model entirely. Zero-configuration should make getting started easy, not turn into a prison.
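The three properties can live together in a small defaults layer. The sketch below is a minimal illustration, not any particular platform's implementation: the default values, the `/healthz` convention, and the helpers `deep_merge`, `container_spec`, and `default_deny_ingress` are assumptions. The dictionary keys follow the Kubernetes container and NetworkPolicy specs. Secure settings and a probe are on by default, and a team overrides only the keys it cares about:

```python
import copy
from typing import Any, Optional

# Hypothetical platform defaults for one container, using Kubernetes container-spec field names.
CONTAINER_DEFAULTS: dict[str, Any] = {
    # Security: refuse to start containers that would run as root; block privilege escalation.
    "securityContext": {
        "runAsNonRoot": True,
        "allowPrivilegeEscalation": False,
    },
    # Functionality: assumes a platform convention that every app serves /healthz on port 8080.
    "readinessProbe": {
        "httpGet": {"path": "/healthz", "port": 8080},
        "periodSeconds": 10,
    },
    "resources": {
        "requests": {"cpu": "100m", "memory": "128Mi"},
        "limits": {"memory": "256Mi"},
    },
}


def deep_merge(base: dict[str, Any], override: dict[str, Any]) -> dict[str, Any]:
    """Return a new dict: nested dicts merge key by key, other override values replace the default."""
    result = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = deep_merge(result[key], value)
        else:
            result[key] = copy.deepcopy(value)
    return result


def container_spec(name: str, image: str,
                   overrides: Optional[dict[str, Any]] = None) -> dict[str, Any]:
    """Customizability: start from the defaults and apply only what the team overrides."""
    spec = deep_merge(CONTAINER_DEFAULTS, overrides or {})
    spec.update({"name": name, "image": image})
    return spec


def default_deny_ingress(namespace: str) -> dict[str, Any]:
    """NetworkPolicy that blocks all inbound traffic to every pod in the namespace until allowed."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        "spec": {"podSelector": {}, "policyTypes": ["Ingress"]},
    }


# Zero configuration: every default applies.
web = container_spec("web", "registry.example.com/web:1.4.2")

# Deviation: change only the probe path and keep everything else.
api = container_spec("api", "registry.example.com/api:2.0.0",
                     {"readinessProbe": {"httpGet": {"path": "/ready"}}})
print(api["readinessProbe"]["httpGet"])  # {'path': '/ready', 'port': 8080}
print(api["securityContext"]["runAsNonRoot"])  # True
```

Two caveats apply to these particular defaults: the default-deny policy only takes effect if the cluster's network plugin enforces NetworkPolicy, and the probe default is only a good default if the `/healthz` convention is documented and actually followed.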

Zero-Configuration Kubernetes in Practice

Managed Kubernetes services like GKE, EKS, or AKS take some of the burden off. They manage the control plane, updates, and underlying infrastructure. But the application layer — how deployments are structured, how networking works, how environments are separated — remains the team's responsibility.

PaaS layers on Kubernetes go a step further. They abstract Kubernetes primitives behind a higher-level interface: instead of a Deployment, there's an App. Instead of an Ingress object, there's a Domain. Instead of an HPA, there's a scaling policy in readable form.
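To make this mapping concrete, here is a minimal sketch of how such a layer might expand a high-level app description into standard Kubernetes objects. The `App` fields, naming scheme, and default values are hypothetical; the emitted dictionaries use the real `apps/v1`, `v1`, `networking.k8s.io/v1`, and `autoscaling/v2` schemas in abbreviated form, and the TLS secret assumes a tool such as cert-manager populates it:

```python
from dataclasses import dataclass
from typing import Any


@dataclass
class App:
    """Hypothetical high-level app description that replaces hand-written manifests."""
    name: str
    image: str
    domain: str
    port: int = 8080
    min_replicas: int = 2
    max_replicas: int = 5
    target_cpu_percent: int = 70


def render(app: App) -> list[dict[str, Any]]:
    """Expand an App into a Deployment, Service, Ingress (the "Domain"), and HPA (the "scaling policy")."""
    labels = {"app": app.name}
    container = {
        "name": app.name,
        "image": app.image,
        "ports": [{"containerPort": app.port}],
        # A CPU request is required for the HPA's utilization target to work.
        "resources": {"requests": {"cpu": "100m", "memory": "128Mi"}},
    }
    deployment = {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": app.name, "labels": labels},
        # spec.replicas is omitted on purpose: the HPA owns the replica count.
        "spec": {
            "selector": {"matchLabels": labels},
            "template": {"metadata": {"labels": labels}, "spec": {"containers": [container]}},
        },
    }
    service = {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": app.name},
        "spec": {"selector": labels, "ports": [{"port": 80, "targetPort": app.port}]},
    }
    ingress = {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": app.name},
        "spec": {
            # Assumes something like cert-manager fills this TLS secret.
            "tls": [{"hosts": [app.domain], "secretName": f"{app.name}-tls"}],
            "rules": [{
                "host": app.domain,
                "http": {"paths": [{
                    "path": "/",
                    "pathType": "Prefix",
                    "backend": {"service": {"name": app.name, "port": {"number": 80}}},
                }]},
            }],
        },
    }
    hpa = {
        "apiVersion": "autoscaling/v2",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": app.name},
        "spec": {
            "scaleTargetRef": {"apiVersion": "apps/v1", "kind": "Deployment", "name": app.name},
            "minReplicas": app.min_replicas,
            "maxReplicas": app.max_replicas,
            "metrics": [{
                "type": "Resource",
                "resource": {
                    "name": "cpu",
                    "target": {"type": "Utilization", "averageUtilization": app.target_cpu_percent},
                },
            }],
        },
    }
    return [deployment, service, ingress, hpa]


manifests = render(App(name="shop", image="registry.example.com/shop:1.0", domain="shop.example.com"))
print([m["kind"] for m in manifests])
# ['Deployment', 'Service', 'Ingress', 'HorizontalPodAutoscaler']
```

The developer fills in three fields of `App`; naming, ports, TLS, and scaling rules are decided once, inside `render`, for every team.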

DevOps-as-a-Service platforms often go further still: besides providing the abstraction, they take over operations, monitoring, security baselines, and incident handling as a service, so teams can focus even more on product development. All of this without the vendor lock-in that traditional PaaS solutions often bring.

The result: a developer can deploy an app without ever having seen a YAML file. A DevOps engineer can define infrastructure standards centrally that all teams automatically follow.

When Zero-Config Hits Its Limits

No approach fits every context. Zero-configuration at the PaaS level has limits you should be aware of.

Highly specific infrastructure: Teams with GPU workloads, specialized network architectures, or bare-metal requirements will quickly hit the limits of opinionated defaults.

Compliance-driven environments: Regulatory requirements can demand very specific configurations that a generic default doesn't cover. Here you need a platform that offers both good defaults and fine-grained customizability.

Migration scenarios: Teams with complex, organically grown Kubernetes infrastructure can't switch to zero-config overnight. Migration requires a clear strategy.

These are real limitations, but they affect a significantly smaller portion of teams than the Kubernetes community sometimes assumes.

Developer Experience as a Business Decision

Time-to-deploy is a metric that's rarely measured, yet it reveals a great deal about the health of a development process. How long does it take until a new developer has their first pull request in production? How many hours per week does an experienced team spend configuring infrastructure instead of building features?

These numbers aren't an abstract DX topic. They're direct productivity losses.
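Both numbers are cheap to compute once the raw data exists. A minimal sketch, assuming you can export each new developer's start date and the date their first change reached production, plus a team's weekly hours spent on infrastructure configuration; all names, dates, and hours below are made up for illustration:

```python
from datetime import date
from statistics import median

# Hypothetical export: developer -> (start date, date of first change in production).
onboarding = {
    "dev_a": (date(2024, 3, 4), date(2024, 3, 8)),
    "dev_b": (date(2024, 4, 1), date(2024, 4, 19)),
    "dev_c": (date(2024, 5, 6), date(2024, 5, 13)),
}


def days_to_first_deploy(records: dict[str, tuple[date, date]]) -> dict[str, int]:
    """Calendar days between start date and first production deploy, per developer."""
    return {dev: (deployed - started).days for dev, (started, deployed) in records.items()}


def infra_share(infra_hours: float, total_hours: float) -> float:
    """Fraction of a team's weekly hours spent on infrastructure configuration."""
    return infra_hours / total_hours


per_dev = days_to_first_deploy(onboarding)
print(per_dev)                    # {'dev_a': 4, 'dev_b': 18, 'dev_c': 7}
print(median(per_dev.values()))   # 7
# Example: 12 hours of configuration work in a five-person team at 40 hours each.
print(f"{infra_share(12, 200):.0%}")  # 6%
```

The median is used rather than the mean so that one unusually slow onboarding does not dominate the metric.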

Configuration overhead is particularly expensive because it grows multiplicatively rather than linearly: every new project needs configuration for every environment, and every team maintains its own variant of it. And because configuration is rarely documented, this knowledge is often tied to individuals, a risk that surfaces at the next personnel change.

The Hidden Costs of Kubernetes Complexity

The obvious cost factor is personnel: someone has to understand, build, and maintain the infrastructure. But there are subtler costs.

Error sources from complexity: Every line of configuration is a potential source of errors, whether it's a resource limit set too low, a missing network policy rule, or a liveness probe pointing at the wrong port or path. These errors are hard to debug and expensive in production.
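Some of these errors can be caught before they reach production. A minimal sketch of a lint pass over a Deployment manifest that has already been parsed into a dictionary; the two checks (missing resource limits, a probe aimed at a port the container does not declare) are examples chosen for illustration, not an exhaustive rule set:

```python
from typing import Any


def lint_deployment(manifest: dict[str, Any]) -> list[str]:
    """Return human-readable findings for common container misconfigurations."""
    findings: list[str] = []
    for c in manifest["spec"]["template"]["spec"].get("containers", []):
        name = c.get("name", "<unnamed>")
        if not c.get("resources", {}).get("limits"):
            findings.append(f"{name}: no resource limits set")
        ports = c.get("ports", [])
        declared = {p.get("containerPort") for p in ports}
        declared_names = {p["name"] for p in ports if p.get("name")}
        for probe_key in ("livenessProbe", "readinessProbe"):
            http = c.get(probe_key, {}).get("httpGet")
            if http is None:
                continue
            port = http.get("port")
            # Heuristic: a probe port may be a number or a named container port. Declaring ports
            # is informational in Kubernetes, so a mismatch is a warning, not proof of a bug.
            if port not in declared and port not in declared_names:
                findings.append(f"{name}: {probe_key} targets port {port}, which the container does not declare")
    return findings


deployment = {
    "spec": {"template": {"spec": {"containers": [{
        "name": "web",
        "image": "registry.example.com/web:1.0",
        "ports": [{"containerPort": 8080}],
        "livenessProbe": {"httpGet": {"path": "/healthz", "port": 80}},
    }]}}}
}

for finding in lint_deployment(deployment):
    print(finding)
# web: no resource limits set
# web: livenessProbe targets port 80, which the container does not declare
```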

Onboarding overhead: A new developer who needs three weeks to understand the infrastructure before becoming productive is a real cost factor. Zero-configuration platforms can cut this overhead considerably, because there is far less platform-specific setup to learn before the first deployment.

Cognitive load: Developers who have to think about business logic and Kubernetes internals at the same time make worse decisions in both areas. Focus is a limited resource.

Zero-Configuration Kubernetes with lowcloud

lowcloud addresses exactly this problem. As a Kubernetes DevOps-as-a-Service (DaaS) platform, lowcloud ships with sensible defaults for security, networking, scaling, and monitoring, so teams don't have to define these themselves. Those who want to dig deeper can; those who just want to deploy can do so right away.

The goal isn't to hide Kubernetes. It's to manage Kubernetes complexity where it belongs — at the platform level — instead of distributing it across every development team.

If your team wants to spend more time on product development and less on infrastructure configuration, that's the starting point worth thinking about.
